Genomics and epigenomics today operate amid a data deluge. Mapping and sequencing the genome has become routine, and it now produces not gigabytes, not terabytes, but petabytes of data. Indeed, genomics was one of the first disciplines in which the change of scale that characterizes “big data” occurred. Producing, managing and using data of this kind and scale requires an entirely new organization of labor. This reorganization has new and unexpected consequences that are at once social and scientific, and it even changes the nature of scientific discovery in genomics.

The aim of this symposium is to bring together social and scientific questions around big data in genomics and epigenomics. For example, the possible infrastructures for these data have different effects on patient security and on physicians' liability, so the choice of infrastructure must be made socially. Many databases are produced in a completely decentralized manner. To link them, the production of data must be standardized. But under whose authority? A core aim of these data is to enable “personalized” medicine, i.e. medicine tailored to the characteristics of a single patient. Technically, how does this affect the classical statistical tools used in medicine, given that it seems to radically contradict their foundation on homogeneous groups and on the notion of inference from a sample? On a completely different level, what is a “person” when it is defined only by its genome? Is a genome enough to define health and disease? Are these persons cured, exposed, or both, by the capture of their data?

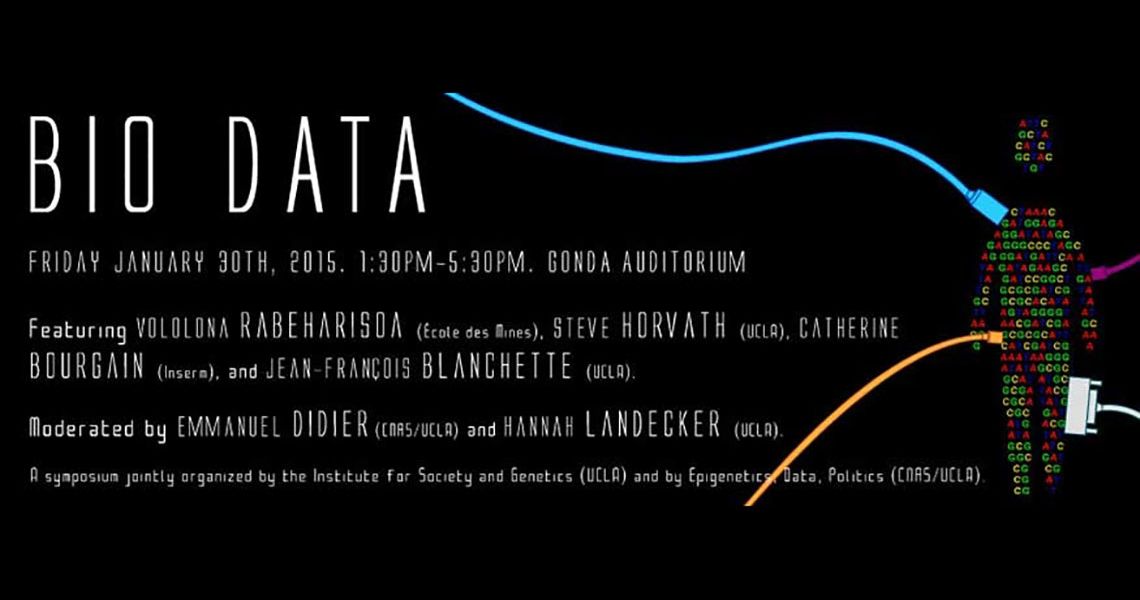

Genomics sits on the tip of an iceberg of data that is often overlooked, yet this iceberg is a key actor shaping the future political effects of the discipline. This symposium brings together four distinguished speakers from the life and social sciences to address the political and scientific life of big data in genomics.